The Model Context Protocol (MCP) might be the least sexy name in AI — but it’s about to change how everything connects.

The Plug-and-Play Era of AI

Technology companies building new products and automations are realizing they need an AI‑first mindset. Because adoption is happening at lightning‑fast speed, we’re already seeing anti‑patterns emerge.

In a world without MCP, every AI workflow has to integrate each tool by hand or build it from scratch. That tightly couples components to one another and makes them hard to reuse. Sounds complicated?

Let’s make it concrete. Suppose you want to build an internal tool that empowers your customer‑success team to resolve issues without waiting days for an engineer.

In the past, you’d spend days wiring together your databases, log repositories, authorization servers, and ticketing system before the tool was even usable — and you’d be left with another system to maintain.

Scratch all that. Have the team install a chat-based LLM client, such as Claude Desktop, spin up MCP servers for the services they need (AWS CloudWatch, Auth0, Jira, GitHub…), and configure those servers with view-only tokens. Done. The team can start chatting immediately, and the LLM will orchestrate the available tools to solve customer issues.

Modern reasoning‑capable LLMs can plan, proactively query logs, inspect the database, look for prior occurrences, and suggest solutions — all through natural language, with no extra code to maintain.

Okay, But What Is MCP?

MCP is an open protocol designed to let AI assistants access external tools and data in a standardized, secure way.

It was introduced by Anthropic in late 2024 and quickly got picked up by OpenAI, Microsoft, and basically everyone tired of rebuilding the same integration layer a thousand times.

At its core, MCP lets any LLM-powered app (like Claude, GPT, Copilot, or your custom bot) talk to any software system that exposes an MCP server. Everything goes through one shared protocol, so you don’t reinvent the wheel for every integration.

MCP defines:

- What resources are available (documents, APIs, databases, etc.)

- What actions the AI can take (query a DB, send an email, run a script)

- How to talk to those resources (standardized, language-agnostic JSON-RPC 2.0 messages)
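For a concrete feel of what that messaging looks like on the wire, here is a sketch of a few MCP messages built as Python dicts. The method names `tools/list`, `tools/call`, and `resources/list` and the `name` / `description` / `inputSchema` fields come from the MCP specification. The `query_sales` tool, its schema, and the SQL are hypothetical and made up for illustration.

```python
import json

# A client asks a server which tools it offers (method name from the MCP spec).
list_request = {"jsonrpc": "2.0", "id": 1, "method": "tools/list"}

# A possible server reply. The "query_sales" tool is hypothetical; the
# name/description/inputSchema fields are what the model reads to decide
# when and how to call it.
list_response = {
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "tools": [
            {
                "name": "query_sales",
                "description": "Run a read-only SQL query against the sales database",
                "inputSchema": {
                    "type": "object",
                    "properties": {"sql": {"type": "string"}},
                    "required": ["sql"],
                },
            }
        ]
    },
}

# The client invokes a tool by name, passing JSON arguments that match the schema.
call_request = {
    "jsonrpc": "2.0",
    "id": 2,
    "method": "tools/call",
    "params": {
        "name": "query_sales",
        "arguments": {"sql": "SELECT region, SUM(amount) FROM sales GROUP BY region"},
    },
}

# Readable data (documents, files, records) is discovered separately as resources.
resources_request = {"jsonrpc": "2.0", "id": 3, "method": "resources/list"}

for message in (list_request, list_response, call_request, resources_request):
    print(json.dumps(message))
```

The design point is that the tool description travels with the tool. Any MCP-aware client can show it to its model without integration code written for that one tool.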

In short, it’s a protocol built for LLMs: capabilities are described in a way a model can discover and use on its own.

Why You Should Care (Especially If You’re a CTO Reading This at 1 AM)

Let’s cut to it: MCP saves you from AI integration hell.

Here’s what it fixes:

🧩 No more custom spaghetti code

Forget building one-off plugins for every tool. MCP lets you reuse prebuilt connectors — or write your own once and reuse them across AI systems.

🔍 Live, contextual, accurate answers

Your AI doesn’t have to hallucinate answers from frozen training data. It can go get the real stuff — right from your systems, at runtime.

🤖 Your AI becomes an actual assistant

Not just a chatbot. An AI that can do things: pull reports, update records, send messages, even chain multi-step workflows across systems. And you stay in full control of what is read-only and what the AI is allowed to write to.
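What might that control look like in practice? Recent revisions of the MCP specification let a server mark a tool with a `readOnlyHint` annotation, which defaults to false when absent. These annotations are hints from the server, not guarantees. The sketch below therefore treats them as input to a client-side policy that hides anything not marked read-only unless writes are explicitly enabled. The tool names and the `allowed_tools` helper are hypothetical.

```python
# Hypothetical tool descriptions as a server might advertise them.
# "annotations" / "readOnlyHint" follow recent MCP spec revisions; they are
# hints from the server, so the filtering below is our own client-side policy.
ADVERTISED_TOOLS = [
    {"name": "get_ticket", "annotations": {"readOnlyHint": True}},
    {"name": "search_logs", "annotations": {"readOnlyHint": True}},
    {"name": "close_ticket", "annotations": {"readOnlyHint": False}},
    {"name": "legacy_tool"},  # no annotations: assume it may write
]


def allowed_tools(tools: list[dict], allow_writes: bool) -> list[str]:
    """Return the names of the tools the model may see under this policy."""
    names = []
    for tool in tools:
        read_only = tool.get("annotations", {}).get("readOnlyHint", False)
        if read_only or allow_writes:
            names.append(tool["name"])
    return names


print(allowed_tools(ADVERTISED_TOOLS, allow_writes=False))  # ['get_ticket', 'search_logs']
```

Filtering what the model sees is a convenience. The hard boundary should still be the credentials the server runs with, such as the view-only tokens mentioned earlier.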

“MCP is like giving your AI the keys to your software kingdom — but with a smart lock on every door.”

A Quick Reality Check: What Does MCP Actually Do?

Let’s say you ask your AI:

“What were Q1 sales by region, and can you summarize it for our next pitch deck?”

Without MCP?

There go a couple of hours: pulling data manually, pasting it into the LLM’s context window, copying the results back out, and hacking Excel into producing usable charts.

With MCP?

- It queries your sales database (via an MCP server).

- It finds and pulls the relevant slide deck from your file repo.

- It analyzes the numbers, creates a visual, and even drafts a blurb for the deck.

All in one flow. All using natural language. Powered by MCP.

And if you don’t like the chart it produced, you can swap the charting MCP server for a different one and prompt again. Everything is composable and replaceable.

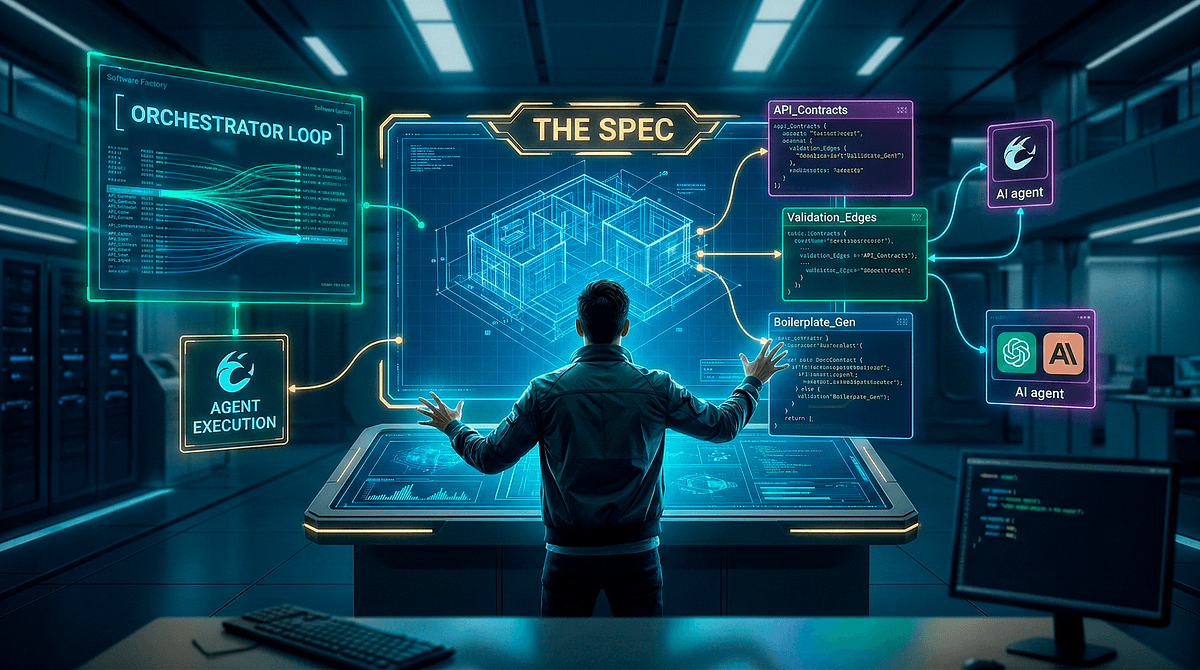

Understand, Plan, Execute

MCP doesn’t require you to define what actions your AI must take ahead of time. Instead, it exposes the resources and tools available, and the AI figures out how to use them based on what the user wants.

It’s like walking into a room and asking, “What can I do here?”

MCP replies, “Well, there’s a whiteboard, a coffee machine, and a database.”

Then your AI decides: “Cool, I’ll write a strategy doc, pull Q1 data, and caffeinate myself.”

MCP decouples interface from intent.

You describe what you want — and the AI determines how to get it done.

This is what turns a chatbot into an agent. Not just answering, but acting. Not hardcoded logic — intelligent delegation.
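To make the "What can I do here?" exchange concrete, here is a toy, in-process sketch of the server side. It only advertises its tools and dispatches calls by name; it knows nothing about what the user asked, and choosing tools is left to the model. The `handle` function, the two tools, and the sales values are hypothetical placeholders. A real server would use an official SDK and a transport such as stdio. The error codes follow JSON-RPC 2.0 conventions: -32601 means method not found, and -32602 means invalid params, which the MCP spec's examples use for unknown tools.

```python
import json

SALES = {"EMEA": 100, "AMER": 200}  # placeholder values, not real data


def get_q1_sales(region: str) -> str:
    return f"{region}: {SALES.get(region, 0)}"


def brew_coffee() -> str:
    return "Coffee is ready."


# Hypothetical tool registry: each entry pairs a function with the
# description and JSON Schema the model will read.
TOOLS = {
    "get_q1_sales": {
        "fn": get_q1_sales,
        "description": "Return Q1 sales for a region",
        "inputSchema": {
            "type": "object",
            "properties": {"region": {"type": "string"}},
            "required": ["region"],
        },
    },
    "brew_coffee": {
        "fn": brew_coffee,
        "description": "Caffeinate the agent",
        "inputSchema": {"type": "object", "properties": {}},
    },
}


def handle(request: dict) -> dict:
    """Answer one JSON-RPC request for the tools/list and tools/call methods."""
    rid = request.get("id")
    method = request.get("method")
    if method == "tools/list":
        tools = [
            {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
            for name, t in TOOLS.items()
        ]
        return {"jsonrpc": "2.0", "id": rid, "result": {"tools": tools}}
    if method == "tools/call":
        params = request.get("params", {})
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return {"jsonrpc": "2.0", "id": rid,
                    "error": {"code": -32602, "message": "Unknown tool"}}
        text = tool["fn"](**params.get("arguments", {}))
        return {"jsonrpc": "2.0", "id": rid,
                "result": {"content": [{"type": "text", "text": text}], "isError": False}}
    return {"jsonrpc": "2.0", "id": rid,
            "error": {"code": -32601, "message": "Method not found"}}


print(json.dumps(handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})))
print(json.dumps(handle({"jsonrpc": "2.0", "id": 2, "method": "tools/call",
                         "params": {"name": "get_q1_sales", "arguments": {"region": "EMEA"}}})))
```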

Is MCP a Buzzword? Or Are We Actually Doing This?

It’s real. And it’s happening fast.

- Anthropic? MCP is built into Claude by default.

- OpenAI? MCP is expected to be available on ChatGPT.

- Microsoft? Already pushing MCP in Copilot, with official SDKs.

There’s a growing open-source ecosystem of MCP servers (connectors for Slack, Google Drive, Postgres, Jira, you name it). You can build your own with official SDKs in Python, JavaScript, and more.

This isn’t a vendor land grab. It’s an open protocol.

“MCP is quietly becoming the lingua franca of AI integration — and soon, if your tool doesn’t speak MCP, it might not speak at all.”

Where’s the Catch?

You might be thinking: this sounds too good to be true. So let’s talk about where MCP still falls short.

Ambiguity. If you connect two or more MCP servers that expose overlapping tools, the LLM won’t always know which one to call. It may ask for clarification or simply pick one arbitrarily. The more servers you connect, the more often this happens, so only enable the services you truly need.
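Besides connecting fewer servers, a client or gateway you control can detect name collisions and prefix each tool with its server's name, so the model sees distinct choices. Below is a minimal sketch. The server names, the tool lists, and the `server__tool` naming convention are assumptions for illustration, not something MCP itself prescribes.

```python
from collections import Counter

# Hypothetical tool names reported by two connected servers (e.g. via tools/list).
SERVERS = {
    "jira": ["search", "create_issue"],
    "github": ["search", "create_issue", "list_pull_requests"],
}


def find_collisions(servers: dict[str, list[str]]) -> set[str]:
    """Return tool names exposed by more than one server."""
    counts = Counter(name for tools in servers.values() for name in tools)
    return {name for name, n in counts.items() if n > 1}


def namespaced(servers: dict[str, list[str]]) -> dict[str, tuple[str, str]]:
    """Map an unambiguous 'server__tool' name back to (server, tool) for routing."""
    return {
        f"{server}__{tool}": (server, tool)
        for server, tools in servers.items()
        for tool in tools
    }


print(sorted(find_collisions(SERVERS)))  # ['create_issue', 'search']
print(sorted(namespaced(SERVERS)))
```

Prefixing removes the naming clash, but the model can still pick the wrong system. Clear tool descriptions still matter.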

Hallucination. LLMs remain statistical prediction engines, which means they can fabricate answers, and MCP doesn’t change that. Avoid granting power-user access to critical systems: you don’t want an AI sending a DROP DATABASE command.
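Least privilege belongs in the credentials first: a read-only database role, or view-only tokens as in the customer-success example. As an extra layer, a query tool you write yourself can refuse anything that isn't a single SELECT. The sketch below is deliberately naive, and `is_read_only` and `run_readonly_query` are hypothetical helpers. Keyword checks are easy to get wrong, so treat this as a complement to database permissions, not a replacement. With SQLite, for instance, the standard library can also open a file read-only via a `file:...?mode=ro` URI and `uri=True`.

```python
import sqlite3


def is_read_only(sql: str) -> bool:
    """Naive guard: accept only a single statement that starts with SELECT."""
    statement = sql.strip().rstrip(";").strip()
    if ";" in statement:  # reject stacked statements like "SELECT 1; DROP ..."
        return False
    return statement.lower().startswith("select")


def run_readonly_query(conn: sqlite3.Connection, sql: str) -> list[tuple]:
    """Execute the query only if it passes the guard above."""
    if not is_read_only(sql):
        raise PermissionError("Only single read-only statements are allowed")
    return conn.execute(sql).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount INTEGER)")
conn.execute("INSERT INTO sales VALUES ('EMEA', 100), ('AMER', 200)")  # placeholder rows

print(run_readonly_query(conn, "SELECT region FROM sales ORDER BY region"))  # [('AMER',), ('EMEA',)]
try:
    run_readonly_query(conn, "DROP DATABASE prod")  # rejected before it reaches the DB
except PermissionError as exc:
    print("blocked:", exc)
```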

Workflows still matter. MCP shines in exploratory or loosely defined tasks, but when objectives are precise, less is more. A focused prompt and a minimal toolset usually outperform a kitchen-sink approach.

TL;DR — Why This Matters

Here’s the cheat sheet:

✅ MCP lets AI assistants access real tools and data

✅ It solves the “every integration is bespoke” problem

✅ It’s model-neutral, secure, and scalable

✅ It’s turning LLMs into real software agents

✅ And yes, it’s already here

If you’re serious about building context-aware, action-ready AI apps — internally or for clients — you’ll want to keep MCP on your radar.

Next Up: We Pop the Hood

In Part 2 of this series, we’ll go deeper into how MCP actually works — with examples, diagrams, and zero soul-crushing YAML. You’ll see why this isn’t just “yet another protocol,” but the foundation for the next wave of AI automation.

Until then, go ahead and imagine your AI assistant plugging into your systems like it’s 2025.

Oh wait — it is.

Want the Full MCP Report?

We’ve done the research so you don’t have to. Download our in-depth MCP Strategy Report to learn:

- How MCP actually works (with diagrams and architecture breakdowns)

- Internal use cases for dev teams, analysts, and operations

- How to build AI-powered client solutions using MCP

- Security, performance, and ROI considerations

- Real-world examples and early adopter insights

👉 Grab the report and start thinking about how MCP fits into your stack: https://forms.gle/C8XQgNg7MPPVYQ8V8

No spam, no fluff: just the good stuff.