Introduction

Organizations typically use ledger functionality to maintain an accurate history of their applications’ business-critical data: for example, to track the history of credits and debits in financial transactions, verify the data history of insurance claims, or trace an item’s movement through a supply chain network.

Ledger databases share some standard features:

- They store a complete history of changes to entities in an immutable way

- They offer a way to verify data integrity

- They offer a convenient way to query the data stored in the ledger

More often than not, ledger functionality is implemented with custom audit tables or audit trails in relational databases. However, this approach is time-consuming, because it requires custom development, and error-prone, because relational databases are not inherently immutable: audit rows can be updated or deleted like any other rows, so changes to the data are hard to track and verify.

Alternatively, a blockchain can serve as a ledger, but it adds complexity and overhead: you need to set up an entire blockchain network with multiple nodes, manage its infrastructure, and have the nodes validate each transaction before the record can be added to the ledger.

Amazon Quantum Ledger Database (or QLDB) is a serverless, fully managed ledger database service that provides an immutable, cryptographically verifiable transaction log owned by a central trusted authority.

Amazon QLDB can track every change to an application’s data, which makes it a ledger database well suited to keeping secure audit logs. All data changes are recorded in an immutable transaction log (the “journal”), so the change history cannot be altered, and each entry in the ledger can be cryptographically verified. The result is a complete and verifiable history of changes over time.

A “Journal First” Database — How does QLDB work?

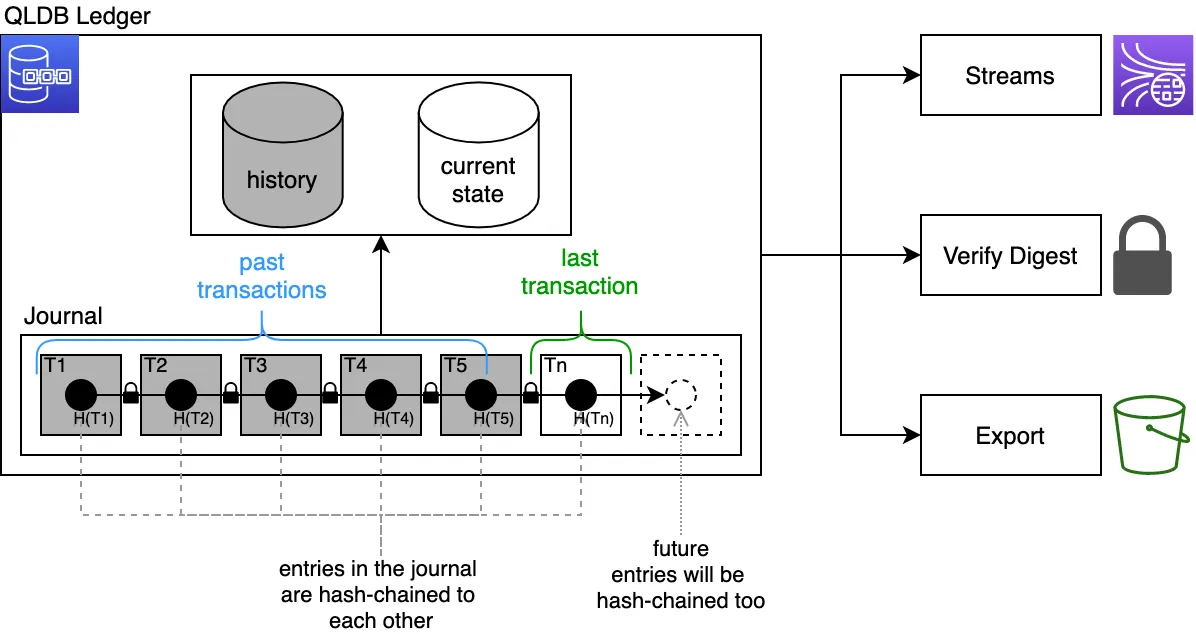

The critical component of Quantum Ledger is the Journal. Think of it as an immutable, append-only transaction log. Transactions are first recorded in the Journal in chronological order; in bookkeeping terms, this is the book of original entry. They are then posted to the appropriate ledger, the book of second entry. Together, these two entries form a complete audit trail of every single change.

In Quantum Ledger, the Journal is a first-class citizen and is externalized: no record can be inserted or updated without first going through the Journal, which contains only committed transactions.
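To make the journal-first ordering concrete, here is a minimal in-memory sketch in Python. It is an illustration under our own assumptions, not QLDB's internal implementation, and every name in it (`JournalFirstStore`, `commit`, `rebuild_tables`) is hypothetical. A transaction is appended to the journal before any table changes, so the tables can always be rebuilt by replaying the journal.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass(frozen=True)
class JournalEntry:
    """One committed transaction: an ordered tuple of (table, doc_id, document) writes."""
    sequence: int
    writes: tuple[tuple[str, str, Any], ...]


@dataclass
class JournalFirstStore:
    """Toy journal-first store (hypothetical): tables are only ever derived from the journal."""
    journal: list[JournalEntry] = field(default_factory=list)
    tables: dict[str, dict[str, Any]] = field(default_factory=dict)

    def commit(self, writes: list[tuple[str, str, Any]]) -> JournalEntry:
        # 1. Append the committed transaction to the journal; entries are never modified.
        entry = JournalEntry(sequence=len(self.journal), writes=tuple(writes))
        self.journal.append(entry)
        # 2. Only then apply it to the materialized tables.
        self._apply(entry, self.tables)
        return entry

    @staticmethod
    def _apply(entry: JournalEntry, tables: dict[str, dict[str, Any]]) -> None:
        for table, doc_id, document in entry.writes:
            tables.setdefault(table, {})[doc_id] = document

    def rebuild_tables(self) -> dict[str, dict[str, Any]]:
        """Replay the whole journal from the start to reproduce the current tables."""
        tables: dict[str, dict[str, Any]] = {}
        for entry in self.journal:
            self._apply(entry, tables)
        return tables
```

Because the tables are only a projection of the journal, `rebuild_tables()` returns the same state as the live `tables` after any sequence of commits, while the journal still holds every earlier value.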

QLDB calculates a SHA-256 hash for every transaction committed to the Journal and stores it as part of that transaction.

Each transaction’s hash is also chained to the hash of the previous transaction. As each block is committed to the Journal, QLDB builds a Merkle tree over the committed transaction hashes. The root of that tree is the ledger digest. Because the digest covers the entire history of changes up to the moment it is requested, it lets you verify the integrity of the whole ledger: you can take any document revision from any point in time and cryptographically prove that it exists and has not been altered in any way since it was first written.
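The chaining and digest mechanics can be sketched in a few lines of Python with `hashlib`. This is a generic hash chain plus a simple Merkle tree under our own assumptions, not QLDB's exact block format or hashing rules (QLDB's verification follows its own documented algorithm), and the function names are hypothetical. It shows why one digest is enough: a single entry is proven by recomputing the root from its hash and a short list of sibling hashes.

```python
import hashlib


def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def chain_hashes(transactions: list[bytes]) -> list[bytes]:
    """Hash each transaction together with the previous chained hash, so changing
    any earlier transaction changes every hash that follows it."""
    chained, previous = [], bytes(32)  # an all-zero hash seeds the chain
    for tx in transactions:
        previous = sha256(previous + sha256(tx))
        chained.append(previous)
    return chained


def _next_level(level: list[bytes]) -> list[bytes]:
    # Hash pairs left to right; an odd node out is promoted unchanged.
    return [sha256(level[i] + level[i + 1]) if i + 1 < len(level) else level[i]
            for i in range(0, len(level), 2)]


def merkle_root(leaves: list[bytes]) -> bytes:
    """Root over a non-empty list of leaves: the analogue of the ledger digest."""
    level = list(leaves)
    while len(level) > 1:
        level = _next_level(level)
    return level[0]


def merkle_proof(leaves: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Sibling hashes from leaf to root, each flagged True if it sits on the left."""
    proof, level = [], list(leaves)
    while len(level) > 1:
        sibling = index ^ 1
        if sibling < len(level):
            proof.append((level[sibling], sibling < index))
        level, index = _next_level(level), index // 2
    return proof


def verify(leaf: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the root from one leaf and its proof, then compare with the digest."""
    current = leaf
    for sibling, sibling_is_left in proof:
        current = sha256(sibling + current) if sibling_is_left else sha256(current + sibling)
    return current == root
```

Altering any earlier transaction changes its chained hash and every hash after it, so the recomputed root no longer matches a digest saved before the change.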

Building on this design, Quantum Ledger lets you query both the current state of the Ledger and the history of its data.

There is a user view that returns the latest data in the Ledger, a committed (system) view that also exposes the system-generated metadata, and a history function that returns past revisions of the data.
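The three ways of reading data can be modeled with a small in-memory table that keeps every revision. This is a hypothetical sketch (`LedgerTable`, `Revision`, and the `Wallet` table in the comments are our own names), not QLDB's storage format; the comments show the PartiQL form each method loosely corresponds to. The system's view is what QLDB's documentation calls the committed view.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Optional


@dataclass(frozen=True)
class Revision:
    doc_id: str                     # system-assigned document ID
    version: int                    # 0 for the first revision, +1 per change
    data: Optional[dict[str, Any]]  # None marks a deletion
    tx_time: datetime               # commit time of the revision


class LedgerTable:
    """Hypothetical table that appends revisions instead of overwriting them."""

    def __init__(self) -> None:
        self._revisions: list[Revision] = []

    def write(self, doc_id: str, data: Optional[dict[str, Any]]) -> None:
        version = sum(1 for r in self._revisions if r.doc_id == doc_id)
        self._revisions.append(Revision(doc_id, version, data, datetime.now(timezone.utc)))

    def _latest(self) -> list[Revision]:
        latest: dict[str, Revision] = {}
        for r in self._revisions:  # later revisions replace earlier ones
            latest[r.doc_id] = r
        return [r for r in latest.values() if r.data is not None]

    def user_view(self) -> list[dict[str, Any]]:
        # ~ SELECT * FROM Wallet : latest data only, no metadata
        return [r.data for r in self._latest()]

    def committed_view(self) -> list[dict[str, Any]]:
        # ~ SELECT * FROM _ql_committed_Wallet : latest data plus system metadata
        return [
            {"data": r.data, "metadata": {"id": r.doc_id, "version": r.version, "txTime": r.tx_time}}
            for r in self._latest()
        ]

    def history(self, doc_id: Optional[str] = None) -> list[Revision]:
        # ~ SELECT * FROM history(Wallet) : every revision, deletions included
        return [r for r in self._revisions if doc_id is None or r.doc_id == doc_id]
```

Nothing is ever overwritten: the user and committed views are computed from the latest revision of each document, while `history` still returns every revision.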

Some essential features that make QLDB appealing are:

- A SQL-like API for querying the data, using the PartiQL dialect.

- A flexible document data model that makes it easy to store schemaless data.

- Full transactional support.

- Streaming, which provides a near real-time flow of the data stored in QLDB, enabling event-driven workflows, real-time analytics, and data replication to other services.

- Export of journal data to Amazon S3.

- Serverless operation: QLDB scales automatically, there are no servers to manage, and pricing is pay-as-you-go.

What we are trying to solve

We recently had to implement in-app currency functionality. It added the concept of “coins” to the in-app economy and let users withdraw from the app into the real-world economy. That’s right: in this application, you can buy in-app coins, but you can also convert them back into real money and withdraw them to your bank account.

This scenario deserves the best audit logs we can come up with, and since there is no multi-party collaboration involved, storing these trails in a blockchain would be overkill.

We decided to keep our audit log tables in QLDB to benefit from its inherent immutability, completeness, and verifiability.

Roadmap

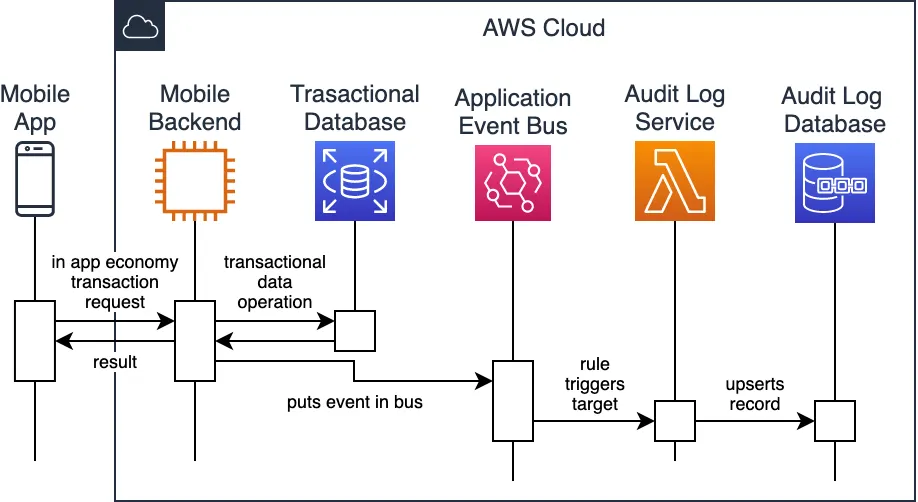

In this project, we have a GraphQL backend that powers the mobile application and a series of microservices that complement the backend by updating the app’s transactional data.

We have an event-driven architecture powered by Amazon EventBridge, which allowed us to have minimal coupling between the microservices, resulting in a flexible, powerful, and easy-to-maintain design.

In the spirit of this lightweight, event-driven ecosystem, we wanted a microservice powered by AWS Lambda to interact with QLDB. Certain events sent to our custom EventBridge bus trigger the application logic in Lambda.

Whenever a state change deserves an audit log, we capture all the data describing that state transition and send an event reflecting the change.

We store the EventBridge event ids in a metadata field in QLDB for correlation purposes.
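A minimal sketch of how an event could be turned into an audit document, assuming the standard EventBridge envelope (`id`, `detail-type`, `source`, `time`, `detail`). The function name and the `auditMetadata` field are hypothetical; the point is only that the event ID travels with the document, so a ledger entry can be traced back to the event that produced it.

```python
from typing import Any


def to_audit_document(event: dict[str, Any]) -> dict[str, Any]:
    """Build a hypothetical audit-log document from an EventBridge event.

    The business payload is copied from `detail`; envelope fields, including the
    event ID used for correlation, go into a separate metadata field.
    """
    return {
        **event["detail"],
        "auditMetadata": {
            "eventId": event["id"],
            "detailType": event["detail-type"],
            "source": event["source"],
            "eventTime": event["time"],
        },
    }
```

Keeping the envelope fields in their own nested field avoids mixing them with the business attributes, and the `auditMetadata` key is written last, so a payload field with the same name cannot overwrite it.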

Journey

When we started developing the QLDB-powered audit logs, we found that AWS maintains a Node.js driver, along with many example projects that helped us understand how to work with this database correctly.

“Migrating” to QLDB

The Node.js driver has no built-in migration tool, so we first rolled out our own migration mechanism, which lets us create the necessary tables and indexes in a repeatable way.
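The core of such a mechanism can be small. The sketch below is hypothetical (it is not part of the QLDB driver): migrations are named lists of PartiQL statements, `execute` stands in for running a statement through the driver, and `applied` stands in for wherever applied migration names are persisted, such as a dedicated table. Running it twice executes each statement only once.

```python
from typing import Callable

# Hypothetical migrations: a unique name plus the PartiQL statements it runs.
MIGRATIONS: list[tuple[str, list[str]]] = [
    ("001_create_audit_log", ["CREATE TABLE AuditLog"]),
    ("002_index_audit_log_entity_id", ["CREATE INDEX ON AuditLog (entityId)"]),
]


def run_migrations(execute: Callable[[str], None], applied: set[str]) -> list[str]:
    """Apply pending migrations in order and return the names applied in this run."""
    newly_applied = []
    for name, statements in MIGRATIONS:
        if name in applied:
            continue  # already run in an earlier deployment: skip it
        for statement in statements:
            execute(statement)
        applied.add(name)  # recorded only after all of its statements ran
        newly_applied.append(name)
    return newly_applied
```

Keeping the list append-only suits QLDB well: since a dropped table never truly disappears, correcting a mistake means adding a new migration rather than editing an old one.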

“DROP TABLE”? (not really)

Keep in mind, though, that QLDB holds truly immutable data. Even if you roll out a migration that creates a table and then drop that table, you will still see it in the database (in an inactive state). You can even “undrop” a table, which restores all of its records as well.

Therefore, with Quantum Ledger it is always wise to run experiments in a development or sandbox QLDB ledger, minimizing the need to drop or alter tables, because in an immutable database whatever you do is there for good.
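The difference between dropping and deleting can be modeled in a few lines. This hypothetical `Catalog` class only mirrors the behavior described above: `DROP TABLE` marks the table inactive and keeps its documents, and `UNDROP TABLE`, which identifies the table by its table ID rather than its name, makes it active again.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class TableEntry:
    table_id: str
    name: str
    status: str = "ACTIVE"
    documents: list[dict[str, Any]] = field(default_factory=list)


class Catalog:
    """Hypothetical model of dropping (not deleting) tables in an immutable ledger."""

    def __init__(self) -> None:
        self.tables: dict[str, TableEntry] = {}

    def create(self, table_id: str, name: str) -> None:
        self.tables[table_id] = TableEntry(table_id, name)

    def drop(self, table_id: str) -> None:
        # Like DROP TABLE: the table becomes INACTIVE, but its documents are kept.
        self.tables[table_id].status = "INACTIVE"

    def undrop(self, table_id: str) -> None:
        # Like UNDROP TABLE "<tableId>": the same table, documents included, is ACTIVE again.
        self.tables[table_id].status = "ACTIVE"

    def active_names(self) -> list[str]:
        return [t.name for t in self.tables.values() if t.status == "ACTIVE"]
```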

No unique indexes

In QLDB, as in many databases, indexes make queries faster and avoid full-table scans. However, QLDB does not support unique indexes. Instead, the database engine assigns every document an internal unique identifier, which you cannot know until you insert the document.

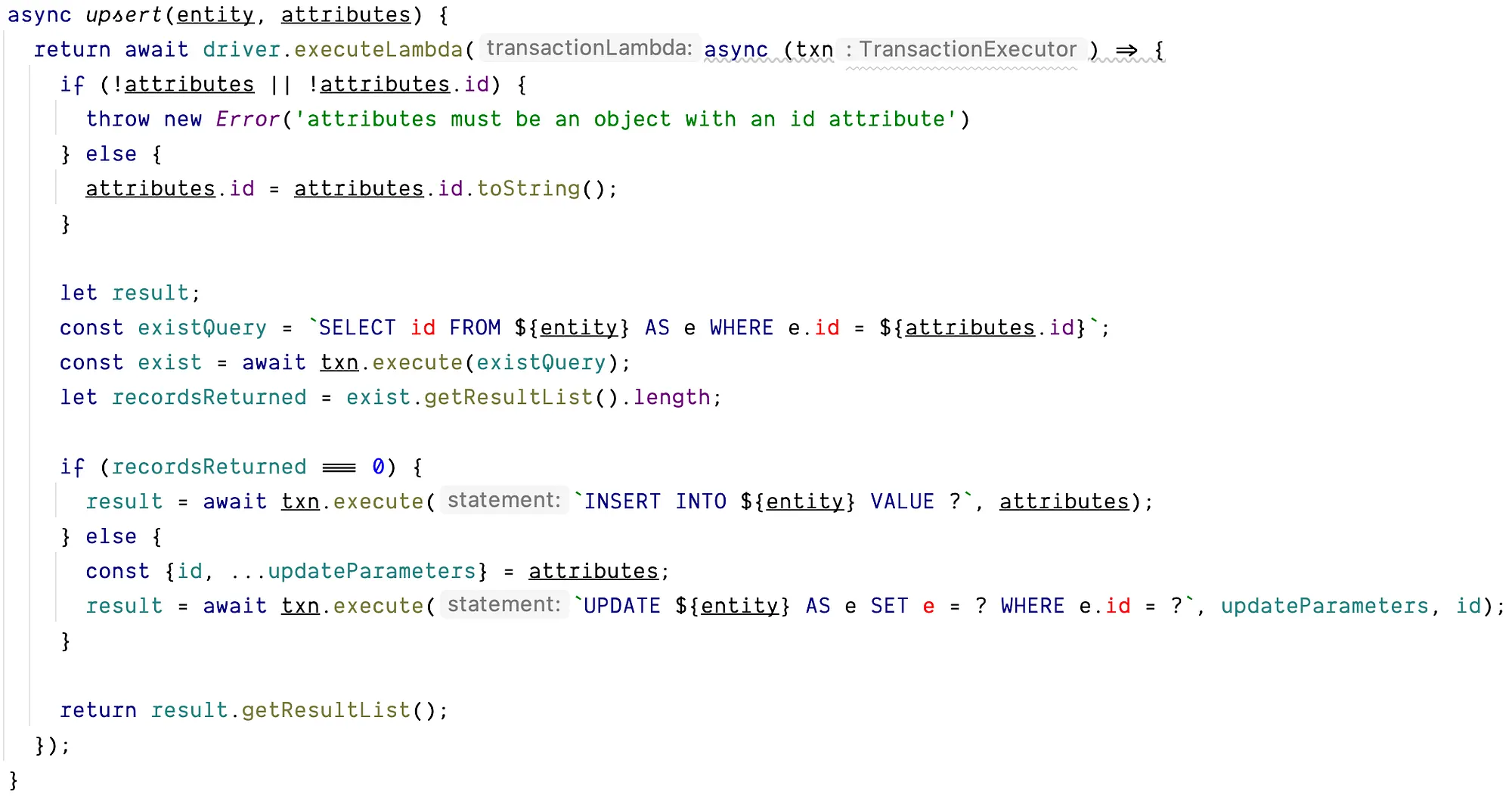

Identifying records this way makes updates more laborious, because you have to implement “upsert” logic in the application layer: first check whether the record you want to write already exists and, if so, query its internal identifier. If the record doesn’t exist, insert it into the table; otherwise, update its attributes.
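The upsert logic can be sketched as follows. `InMemoryTable` is a hypothetical stand-in for a QLDB table in which the engine, not the caller, assigns document IDs; the PartiQL in the comments only approximates the statements you would run. In QLDB, the lookup and the write should execute in the same transaction, and the business key (here `entityId`, an assumed field name) should be indexed so that the lookup avoids a full-table scan.

```python
import uuid
from typing import Any, Optional


class InMemoryTable:
    """Hypothetical stand-in for a QLDB table: document IDs are assigned on insert."""

    def __init__(self) -> None:
        self.docs: dict[str, dict[str, Any]] = {}

    def find_id(self, field: str, value: Any) -> Optional[str]:
        # Roughly: SELECT id FROM T AS t BY id WHERE t.<field> = ?
        for doc_id, doc in self.docs.items():
            if doc.get(field) == value:
                return doc_id
        return None

    def insert(self, doc: dict[str, Any]) -> str:
        # Roughly: INSERT INTO T ?  (the ID is only known after this call)
        doc_id = uuid.uuid4().hex
        self.docs[doc_id] = dict(doc)
        return doc_id

    def update(self, doc_id: str, changes: dict[str, Any]) -> None:
        # Roughly: UPDATE T AS t BY id SET ... WHERE id = ?
        self.docs[doc_id].update(changes)


def upsert(table: InMemoryTable, key_field: str, doc: dict[str, Any]) -> str:
    """Insert `doc`, or update the existing document with the same business key."""
    doc_id = table.find_id(key_field, doc[key_field])
    if doc_id is None:
        return table.insert(doc)
    table.update(doc_id, doc)
    return doc_id
```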

Managing translations from JSON to Amazon Ion

Another particularity to keep in mind is that Quantum Ledger uses Amazon Ion to represent documents. According to the official documentation, Amazon Ion is a richly typed, self-describing, hierarchical data serialization format that offers interchangeable binary and text representations. In our first attempt, we converted the JSON we received in the EventBridge event detail to Ion ourselves. This approach turned out to be laborious and error-prone. Fortunately, the Node.js QLDB driver does a great job of converting JSON to Ion for you: when passing a PartiQL statement to the transaction executor, you can replace each argument with a question mark and then pass the corresponding values as additional parameters in the executor’s execute method call.
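Here is the pattern in sketch form. `RecordingExecutor` is a hypothetical stand-in shaped like the Node.js driver's `execute(statement, ...parameters)` call; it records what would be sent instead of contacting QLDB. The `AuditLog` table and its fields are assumptions. Each value is bound to a `?` placeholder rather than formatted into the statement text, and a whole document can be passed as a single parameter.

```python
from typing import Any


class RecordingExecutor:
    """Hypothetical stand-in for a transaction executor: records calls instead of
    sending them to QLDB."""

    def __init__(self) -> None:
        self.calls: list[tuple[str, tuple[Any, ...]]] = []

    def execute(self, statement: str, *parameters: Any) -> None:
        self.calls.append((statement, parameters))


def insert_audit_log(executor: RecordingExecutor, document: dict[str, Any]) -> None:
    # The whole document is one `?` parameter; converting it to Ion is left to the driver.
    executor.execute("INSERT INTO AuditLog ?", document)


def find_audit_logs(executor: RecordingExecutor, entity_id: str, event_type: str) -> None:
    # Scalar values are bound the same way: one `?` per value, in order.
    executor.execute(
        "SELECT * FROM AuditLog AS a WHERE a.entityId = ? AND a.eventType = ?",
        entity_id,
        event_type,
    )
```

Binding values this way also means you never have to quote or escape them by hand inside the PartiQL string.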

Conclusion

After understanding the caveats described above, it was dead simple to build a secure REST interface powered by AWS Lambda, Amazon Cognito, and Amazon API Gateway to query and verify the QLDB tables in which we stored the secure audit logs.

Quantum Ledger’s immutability and the familiar SQL interface make it easy to store secure audit logs. Being able to verify them cryptographically allows knowing for sure that a critical audit log in an application hasn’t been tampered with.

On top of that, it is a managed, serverless service that frees you from managing infrastructure and software updates, which makes it easy to use.

We think, and we do

Do you have an application that requires secure and immutable audit logs for the state changes on your critical business objects?

Talk to us. We can help!